Building UIs in Figma with hand movements

20/01/2022

Since the release of the latest version of the MediaPipe handpose detection machine learning model, which can detect multiple hands, I've had in mind to try using it to create UIs. Here's the result of a quick prototype built in a few hours!

Before starting, I also came across two projects mixing TensorFlow.js and Figma: one by Anthony DiSpezio that turns gestures into emojis, and one by Siddharth Ahuja that moves Figma's canvas with hand gestures.

I had never made a Figma plugin before but decided to look into it to see if I could build one to design UIs using hand movements.

The first thing to know is that you can't test your plugins in the web version, so you need to install the desktop version while you're developing.

Then, even though you have access to some Web APIs in a plugin, access to the camera and microphone isn't allowed, for security reasons, so I had to figure out how to send the hand data to the plugin.

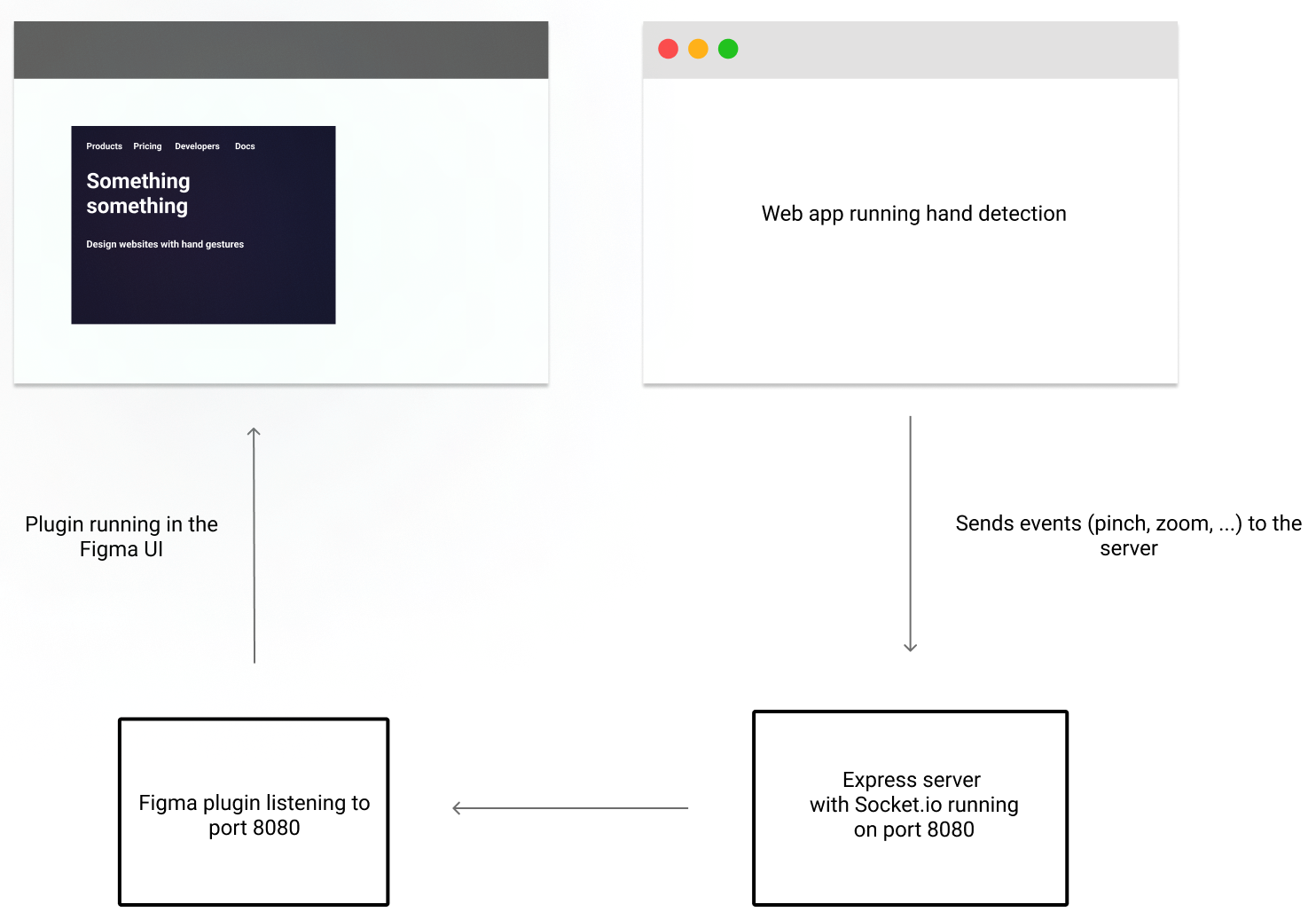

My workaround was to run a separate web app that handles the hand detection and uses Socket.io to send specific events to my Figma plugin via WebSockets.

Here's a quick visualization of the architecture:

Gesture detection with TensorFlow.js

In my separate web app, I am running TensorFlow.js and the hand pose detection model to get the coordinates of my hands and fingers on the screen and create some custom gestures.
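For context, getting those coordinates could look roughly like the sketch below. It assumes the `@tensorflow-models/hand-pose-detection` package is passed in as `handPoseDetection` (with a TensorFlow.js backend already loaded) and that `video` is a `<video>` element showing the webcam feed. `createHandDetector` and `getHandsBySide` are hypothetical helper names, not taken from the original project.

```javascript
// Sketch only: `handPoseDetection` is assumed to be the
// @tensorflow-models/hand-pose-detection module, supplied by the caller.
async function createHandDetector(handPoseDetection) {
  return handPoseDetection.createDetector(
    handPoseDetection.SupportedModels.MediaPipeHands,
    { runtime: "tfjs", modelType: "full", maxHands: 2 } // track both hands
  );
}

// Runs one detection pass and indexes the results by handedness,
// e.g. { Left: {...}, Right: {...} }. Each hand carries a `keypoints`
// array of { x, y, name } objects.
async function getHandsBySide(detector, video) {
  const hands = await detector.estimateHands(video);
  const bySide = {};
  for (const hand of hands) {
    bySide[hand.handedness] = hand;
  }
  return bySide;
}
```

Calling `getHandsBySide` on every animation frame would give the gesture code a fresh pair of hands to inspect.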

Without going into too much detail, here's a code sample for the "zoom" gesture:

    // Palm state; assumed to be declared outside the per-frame detection code
    let palmLeft = false;
    let palmRight = false;

    let leftIndexTip,
      rightIndexTip,
      leftIndexFingerDip,
      rightIndexFingerDip,
      leftMiddleFingerTip,
      rightMiddleFingerTip,
      leftMiddleFingerDip,
      rightMiddleFingerDip,
      leftRingFingerTip,
      rightRingFingerTip,
      leftRingFingerDip,
      rightRingFingerDip;

    if (hands && hands.length > 0) {
      hands.forEach((hand) => {
        if (hand.handedness === "Left") {
          //---------------
          // DETECT PALM
          //---------------
          // Fingertips
          leftIndexTip = hand.keypoints.find(
            (p) => p.name === "index_finger_tip"
          );
          leftMiddleFingerTip = hand.keypoints.find(
            (p) => p.name === "middle_finger_tip"
          );
          leftRingFingerTip = hand.keypoints.find(
            (p) => p.name === "ring_finger_tip"
          );
          // DIP joints (the last joint before each fingertip)
          leftIndexFingerDip = hand.keypoints.find(
            (p) => p.name === "index_finger_dip"
          );
          leftMiddleFingerDip = hand.keypoints.find(
            (p) => p.name === "middle_finger_dip"
          );
          leftRingFingerDip = hand.keypoints.find(
            (p) => p.name === "ring_finger_dip"
          );

          // Palm: every tip is above its DIP joint (smaller y)
          palmLeft =
            leftIndexTip.y < leftIndexFingerDip.y &&
            leftMiddleFingerTip.y < leftMiddleFingerDip.y &&
            leftRingFingerTip.y < leftRingFingerDip.y;
        } else {
          //---------------
          // DETECT PALM
          //---------------
          // Fingertips
          rightIndexTip = hand.keypoints.find(
            (p) => p.name === "index_finger_tip"
          );
          rightMiddleFingerTip = hand.keypoints.find(
            (p) => p.name === "middle_finger_tip"
          );
          rightRingFingerTip = hand.keypoints.find(
            (p) => p.name === "ring_finger_tip"
          );
          // DIP joints
          rightIndexFingerDip = hand.keypoints.find(
            (p) => p.name === "index_finger_dip"
          );
          rightMiddleFingerDip = hand.keypoints.find(
            (p) => p.name === "middle_finger_dip"
          );
          rightRingFingerDip = hand.keypoints.find(
            (p) => p.name === "ring_finger_dip"
          );

          palmRight =
            rightIndexTip.y < rightIndexFingerDip.y &&
            rightMiddleFingerTip.y < rightMiddleFingerDip.y &&
            rightRingFingerTip.y < rightRingFingerDip.y;

          if (palmRight && palmLeft) {
            // zoom: send the horizontal distance between both middle fingertips
            socket.emit("zoom", rightMiddleFingerTip.x - leftMiddleFingerTip.x);
          }
        }
      });
    }

This code looks a bit messy, but that's intentional. The goal was to validate the hypothesis that this approach would work before spending time improving it.

What I did in this sample was check that the y coordinate of the tips of my index, middle and ring fingers is smaller than the y coordinate of their DIP joints (the last joint before the fingertip). Since y grows downward on the screen, a smaller y means the tip sits above the joint, so my fingers are straight and I'm doing some kind of "palm" gesture. Once both palms are detected, I emit a "zoom" event and send the difference in x coordinate between my right and left middle fingertips to represent some kind of width.
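The palm check described above can be condensed into a couple of small functions. This is a sketch rather than the code the prototype used: `isPalm`, `getKeypoint` and `zoomWidth` are hypothetical names, and the only assumption about the data is the `{ x, y, name }` keypoint shape and the `handedness` field seen in the sample.

```javascript
// Finger prefixes follow the keypoint naming used in the sample above
// ("index_finger_tip", "index_finger_dip", ...).
const FINGERS = ["index_finger", "middle_finger", "ring_finger"];

// Look up a single keypoint by name on a detected hand.
const getKeypoint = (hand, name) =>
  hand.keypoints.find((p) => p.name === name);

// A hand counts as a "palm" when every tracked fingertip sits above its
// DIP joint. Image y grows downward, so "above" means a smaller y.
function isPalm(hand) {
  return FINGERS.every((finger) => {
    const tip = getKeypoint(hand, `${finger}_tip`);
    const dip = getKeypoint(hand, `${finger}_dip`);
    return Boolean(tip && dip) && tip.y < dip.y;
  });
}

// Horizontal distance between both middle fingertips, or null when the
// gesture is not active (a hand is missing or not showing a palm).
function zoomWidth(hands) {
  const left = hands.find((h) => h.handedness === "Left");
  const right = hands.find((h) => h.handedness === "Right");
  if (!left || !right || !isPalm(left) || !isPalm(right)) return null;
  return (
    getKeypoint(right, "middle_finger_tip").x -
    getKeypoint(left, "middle_finger_tip").x
  );
}
```

With these helpers, the per-frame code would reduce to computing `zoomWidth(hands)` and calling `socket.emit("zoom", width)` whenever the result is not `null`.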

Express server with socket.io

The server side uses Express to serve my front-end files and socket.io to receive and emit messages.

Here's a code sample of the server listening for the zoom event and emitting it to other applications:

    const express = require("express");
    const app = express();
    const http = require("http");
    const server = http.createServer(app);
    const { Server } = require("socket.io");
    const io = new Server(server);

    // Serve the hand detection front end
    app.use("/", express.static("public"));

    io.on("connection", (socket) => {
      console.log("a user connected");
      // Relay zoom events to every connected client, including the Figma plugin
      socket.on("zoom", (e) => {
        io.emit("zoom", e);
      });
    });

    server.listen(8080, () => {
      console.log("listening on *:8080");
    });

Figma plugin

On the Figma side, there are two parts. A ui.html file is usually responsible for showing the UI of the plugin, and a code.js file is responsible for the logic.

My HTML file starts the socket connection by connecting to the same port as the one used by my Express server, and forwards the events to my JavaScript file.

For example, here's a sample to implement the "Zoom" functionality:

In ui.html:

    <script src="https://cdnjs.cloudflare.com/ajax/libs/socket.io/4.4.1/socket.io.js"></script>
    <script>
      var socket = io("ws://localhost:8080", { transports: ["websocket"] });
    </script>
    <script>
      // Zoom zoom
      socket.on("zoom", (msg) => {
        parent.postMessage({ pluginMessage: { type: "zoom", msg } }, "*");
      });
    </script>

In code.js:

    // Map val from the range [min, max] to [0, 1], clamping anything outside
    const normalize = (val, max, min) =>
      Math.max(0, Math.min(1, (val - min) / (max - min)));

    figma.showUI(__html__);
    figma.ui.hide();

    figma.ui.onmessage = (msg) => {
      // Messages sent from ui.html
      if (msg.type === "zoom") {
        const normalizedZoom = normalize(msg.msg, 1200, 0);
        figma.viewport.zoom = normalizedZoom;
      }
    };

According to the Figma docs, a zoom level of 1 means 100% and 0.5 means 50%, so I am normalizing the coordinates I get from the hand detection app to a value between 0 and 1.

So as I move my hands closer together or further apart, I am zooming out of or into the design.
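To make the mapping concrete, here is the `normalize` helper from code.js applied to a few sample widths, using the same 0 to 1200 range. The `MIN_ZOOM` floor and the `toZoom` wrapper are hypothetical additions of mine, on the assumption that a zoom of exactly 0 is best avoided.

```javascript
// Same helper as in code.js: map `val` from [min, max] to [0, 1], clamped.
const normalize = (val, max, min) =>
  Math.max(0, Math.min(1, (val - min) / (max - min)));

// Hypothetical floor so the viewport never receives a zoom of exactly 0.
const MIN_ZOOM = 0.05;
const toZoom = (width) => Math.max(MIN_ZOOM, normalize(width, 1200, 0));

console.log(toZoom(600)); // 0.5  (halfway through the range)
console.log(toZoom(1500)); // 1    (clamped at the top of the range)
console.log(toZoom(-40)); // 0.05 (clamped to the floor)
```

The upper bound of 1200 comes from the sample above; a different camera resolution would likely call for a different value.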

It's a pretty quick walkthrough, but from there, any custom gesture from the front end can be sent to Figma and used to trigger layers, create shapes, change colors, etc!
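As a rough idea of what that could look like in code.js, here is a sketch of a handler for two more gestures. The `"create-rectangle"` and `"set-color"` message types and their payload fields are hypothetical, and `figmaApi` stands in for the global `figma` object so the function can be exercised outside of Figma.

```javascript
// Sketch: `figmaApi` stands in for the global `figma` object in code.js.
// The message types and payload shapes below are made up for illustration.
function handleGesture(figmaApi, msg) {
  if (msg.type === "create-rectangle") {
    // Create a rectangle at the position and size sent by the gesture app.
    const rect = figmaApi.createRectangle();
    rect.x = msg.x;
    rect.y = msg.y;
    rect.resize(msg.width, msg.height);
    figmaApi.currentPage.appendChild(rect);
    return rect;
  }
  if (msg.type === "set-color") {
    // Figma expects RGB channels as numbers between 0 and 1.
    for (const node of figmaApi.currentPage.selection) {
      if ("fills" in node) {
        node.fills = [{ type: "SOLID", color: msg.color }];
      }
    }
  }
  return null;
}

// In the plugin: figma.ui.onmessage = (msg) => handleGesture(figma, msg);
```

Each new gesture then only needs a matching `socket.emit` in the detection app and a `socket.on` that forwards it from ui.html.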

Having to run a separate app to be able to do this is not optimal, but I doubt Figma will ever enable access to the getUserMedia Web API in a plugin, so in the meantime, that was an interesting workaround to figure out!