Toggle dark/light mode by clapping your hands

14/04/2021

A few days ago, we released dark mode in beta on the Netlify app. While we're still iterating on it, it's currently only available through Netlify Labs, under the user settings.

It's not that many clicks to get there, but it felt like too many to me, and while waiting for it to become easily accessible from the nav, I wondered... Wouldn't it be fun to be able to toggle it by clapping your hands, just like some home automation devices?

So I spent part of the weekend looking into how to build it, and ended up with a Chrome extension that uses TensorFlow.js and a model trained on samples of me clapping my hands to toggle dark mode on and off!

Here is the result:

FUN!!! 😃 Or at least, to me...

So, here is how I went about it.

Training the model

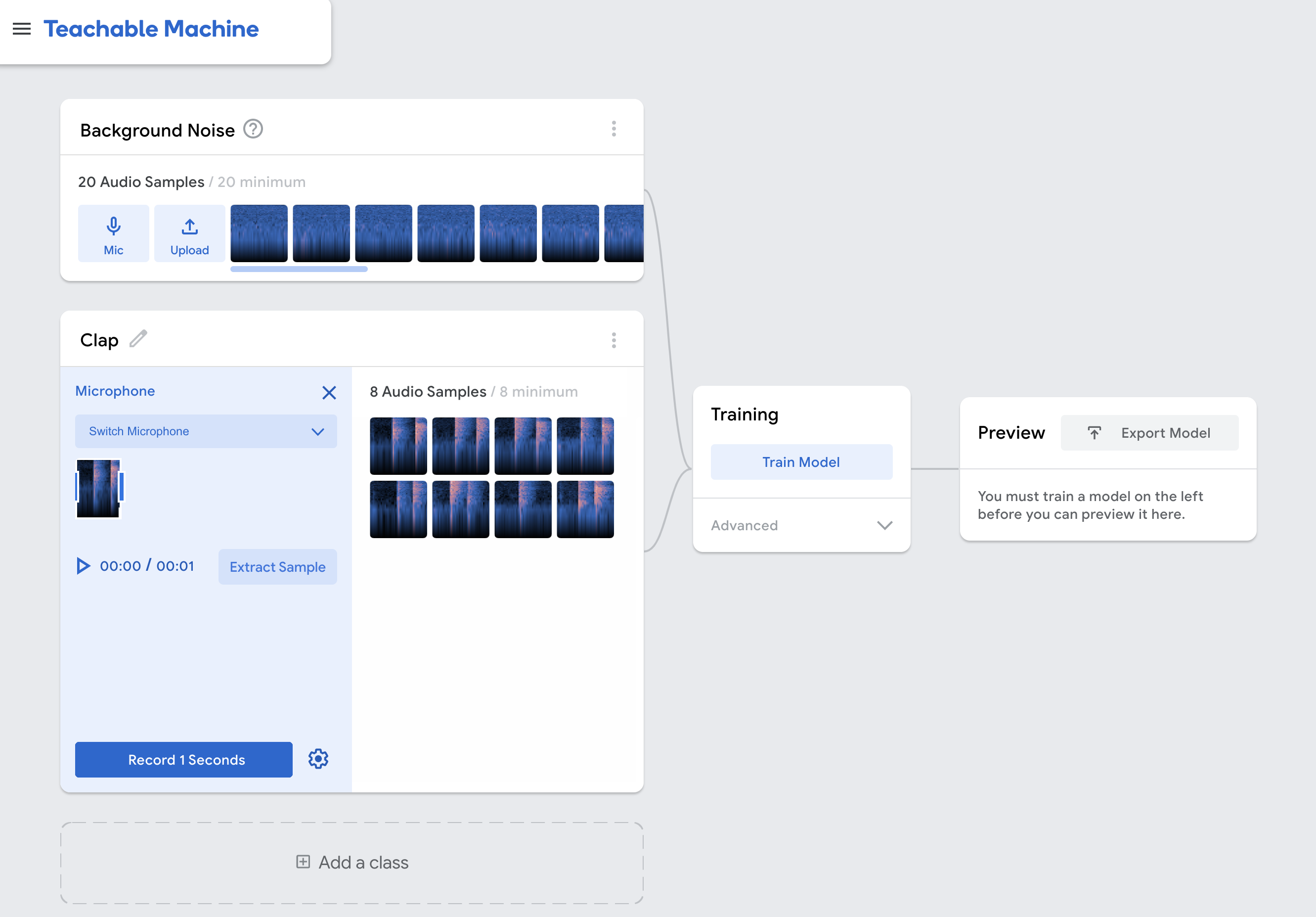

First, I trained the model to make sure detecting the sound of clapping hands would work. To make this much easier and faster, I used the Teachable Machine platform and, more specifically, started an audio project.

The first section asks you to record what your background sounds like. Then, you can create multiple "classes" to record samples of the sounds you would like to use in your project; in this case, clapping hands.

In general, I record samples for a few more classes if I know I'm gonna use the model in an environment with other kinds of sounds.

For example, I also recorded samples of speech so the model would be able to recognise what speech "looks like" and not mistake it for clapping hands.

Once you're done recording your samples, you can move on to training your model, preview it to check that it works as expected, and export it to use in your code.

Building the Chrome extension

I'm not gonna go into too much detail about how to build a Chrome extension because there are a lot of different options, but here's what I did for mine.

At a minimum, you need a manifest.json file that contains details about your extension.

```json
{
  "name": "Dark mode clap extension",
  "description": "Toggle dark mode by clapping your hands!",
  "version": "1.0",
  "manifest_version": 3,
  "permissions": ["storage", "activeTab"],
  "action": {
    "default_icon": {
      "16": "/images/dark_mode16.png",
      "32": "/images/dark_mode32.png",
      "48": "/images/dark_mode48.png",
      "128": "/images/dark_mode128.png"
    }
  },
  "icons": {
    "16": "/images/dark_mode16.png",
    "32": "/images/dark_mode32.png",
    "48": "/images/dark_mode48.png",
    "128": "/images/dark_mode128.png"
  },
  "content_scripts": [
    {
      "js": ["content.js"],
      "matches": ["https://app.netlify.com/*"],
      "all_frames": true
    }
  ]
}
```

The most important parts for this project are the permissions and the content scripts.

Content script

Content scripts are files that run in the context of web pages, so they can read and modify the content of the pages the browser visits.

Depending on the configuration in your manifest.json file, a content script can run on every tab or only on specific ones. Since I set the "matches" parameter to ["https://app.netlify.com/*"], mine only runs when I'm on the Netlify app.

From there, the content script can run the code dedicated to sound detection.
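To make the "matches" behaviour more concrete, here is a simplified sketch of how a pattern like "https://app.netlify.com/*" could be checked against a URL. The matchesPattern and escapeRegex helpers are hypothetical and only cover the cases this extension needs (an exact scheme, an exact or "*."-prefixed host, and "*" wildcards in the path); Chrome's own matcher supports more, such as "<all_urls>", so treat this as an illustration rather than its actual implementation.

```javascript
// Hypothetical, simplified matcher for Chrome-style match patterns such as
// "https://app.netlify.com/*". Supports only: an exact "http"/"https" scheme,
// an exact host or one starting with "*.", and "*" wildcards in the path.
// Chrome's real matcher handles more (e.g. "<all_urls>", the "*" scheme).
function escapeRegex(text) {
  // Escape characters that have a special meaning in regular expressions.
  return text.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
}

function matchesPattern(pattern, urlString) {
  const parts = /^(https?):\/\/([^\/]+)(\/.*)$/.exec(pattern);
  if (!parts) throw new Error(`Unsupported pattern: ${pattern}`);
  const [, scheme, host, path] = parts;
  const url = new URL(urlString);

  // 1. The scheme must match exactly.
  if (url.protocol !== `${scheme}:`) return false;

  // 2. The host must match exactly, or, for "*.example.com", be example.com
  //    itself or one of its subdomains.
  const hostMatches = host.startsWith("*.")
    ? url.hostname === host.slice(2) || url.hostname.endsWith(host.slice(1))
    : url.hostname === host;
  if (!hostMatches) return false;

  // 3. In the path, "*" matches any sequence of characters.
  const pathRegex = new RegExp(
    "^" + path.split("*").map(escapeRegex).join(".*") + "$"
  );
  return pathRegex.test(url.pathname + url.search);
}
```

With the manifest's pattern, only HTTPS pages on app.netlify.com match, which is why the extension stays inactive on every other site.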

Setting up TensorFlow.js to detect sounds

When working with TensorFlow.js, I usually export the model as files on my machine, but this time I chose the other option and uploaded it to Google Cloud, so it's accessible via a URL.
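Because the model is served from a URL, the loading code appends "model.json" and "metadata.json" to that base URL, which only works if the base ends with a slash. Here is a minimal sketch of a hypothetical modelFileUrls helper that normalises this; the example.com address is a placeholder, not a real model.

```javascript
// Hypothetical helper: builds the checkpoint and metadata URLs for a model
// uploaded from Teachable Machine, given the model's base URL.
function modelFileUrls(baseUrl) {
  // Ensure a trailing "/" so the file name is appended to the path
  // instead of replacing its last segment.
  const base = baseUrl.endsWith("/") ? baseUrl : baseUrl + "/";
  return {
    checkpointURL: new URL("model.json", base).href,
    metadataURL: new URL("metadata.json", base).href,
  };
}

// modelFileUrls("https://example.com/models/abc123").checkpointURL
// → "https://example.com/models/abc123/model.json"
```

The slash matters because of how relative URLs resolve: new URL("model.json", "https://example.com/models/abc123") gives https://example.com/models/model.json, silently dropping the last path segment.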

Teachable Machine provides a code sample when you export your model, but basically, you need to start by creating your model:

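```javascript
// Base URL of your model uploaded on Google Cloud. It must end with "/"
// because "model.json" and "metadata.json" are appended to it.
// (Named MODEL_URL rather than URL to avoid shadowing the global URL constructor.)
const MODEL_URL = SPEECH_MODEL_TFHUB_URL;

// Top-level await only works in ES modules; in a classic content script,
// call createModel() from inside an async function instead.
const recognizer = await createModel();

async function createModel() {
  const checkpointURL = MODEL_URL + "model.json"; // model topology and weights manifest
  const metadataURL = MODEL_URL + "metadata.json"; // model metadata, including class labels

  const recognizer = speechCommands.create(
    "BROWSER_FFT", // compute the spectrogram with the browser's native FFT
    undefined, // no built-in vocabulary: we load our own trained model
    checkpointURL,
    metadataURL
  );

  // Wait until the model and its metadata have been fetched and loaded.
  await recognizer.ensureModelLoaded();

  return recognizer;
}
```

Once that's done, you can start the live prediction: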

```javascript
// An array containing the classes trained. In my case ['Background noise', 'Clap', 'Speech']
const classLabels = recognizer.wordLabels();

recognizer.listen(
  (result) => {
    // An array of floating-point numbers between 0 and 1 representing the probability for each class
    const scores = result.scores;
    // Get the index of the max value, because it represents the highest probability
    const predictionIndex = scores.indexOf(Math.max(...scores));
    // Look up the class at that index in the array of trained classes
    const prediction = classLabels[predictionIndex];
    console.log(prediction);
  },
  {
    includeSpectrogram: false, // don't pass the spectrogram to the callback
    probabilityThreshold: 0.75, // only call back when the highest score exceeds 0.75
    invokeCallbackOnNoiseAndUnknown: true, // also call back for background noise
    overlapFactor: 0.5, // successive audio frames overlap by 50%
  }
);
```

If everything works well, when this code runs, it should log either "Background noise", "Clap" or "Speech" based on what is predicted from live audio data.
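The callback above boils down to an argmax over the scores. Pulling that step into a pure function makes it easy to test outside the browser. topPrediction is a hypothetical helper, and its optional threshold only mirrors the idea behind probabilityThreshold rather than reproducing the library's behaviour.

```javascript
// Hypothetical pure version of the argmax step in the listen() callback.
// `scores` and `labels` are parallel arrays, like result.scores and
// recognizer.wordLabels(). Returns null if the best score is below `threshold`.
function topPrediction(scores, labels, threshold = 0) {
  if (scores.length !== labels.length) {
    throw new Error("scores and labels must have the same length");
  }
  // Single pass to find the index of the highest score.
  let best = 0;
  for (let i = 1; i < scores.length; i++) {
    if (scores[i] > scores[best]) best = i;
  }
  return scores[best] >= threshold
    ? { label: labels[best], score: scores[best] }
    : null;
}

// topPrediction([0.1, 0.8, 0.1], ["Background noise", "Clap", "Speech"])
// → { label: "Clap", score: 0.8 }
```

A single loop finds the index in one pass and avoids spreading the whole array into Math.max(), and it works the same on plain arrays and on typed arrays such as Float32Array.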

Now, to toggle Netlify's dark mode, I replaced the console.log statement with a small piece of logic. Dark mode is currently implemented by adding a tw-dark class to the body.

```javascript
if (prediction === "Clap") {
  if (document.body.classList.contains("tw-dark")) {
    document.body.classList.remove("tw-dark");
    localStorage.setItem("nf-theme", "light");
  } else {
    document.body.classList.add("tw-dark");
    localStorage.setItem("nf-theme", "dark");
  }
}
```

I also update the value in localStorage so it is persisted.
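Because successive audio frames overlap (overlapFactor: 0.5), it's possible for a single clap to be predicted as "Clap" more than once in a row, which would toggle the theme twice and look like nothing happened. One way to guard against that, sketched here as an assumption rather than something this extension is known to need, is a short cooldown. createClapHandler and the 1000 ms default are hypothetical, and the clock is injectable so the logic can be tested without a browser.

```javascript
// Hypothetical cooldown wrapper around the clap-handling logic.
// onClap:     function to run for an accepted clap (e.g. the tw-dark toggle).
// cooldownMs: minimum time between two accepted claps (assumed value).
// now:        clock function, injectable for testing.
function createClapHandler(onClap, cooldownMs = 1000, now = () => Date.now()) {
  let lastClapAt = -Infinity; // no clap accepted yet

  return function handlePrediction(prediction) {
    if (prediction !== "Clap") return false; // ignore other classes

    const t = now();
    if (t - lastClapAt < cooldownMs) return false; // too soon: likely the same clap

    lastClapAt = t;
    onClap();
    return true;
  };
}

// Possible use in the content script (browser only):
// const handlePrediction = createClapHandler(() => {
//   const isDark = document.body.classList.toggle("tw-dark");
//   localStorage.setItem("nf-theme", isDark ? "dark" : "light");
// });
// ...then call handlePrediction(prediction) inside the recognizer.listen() callback.
```

The commented usage relies on classList.toggle() returning true when the class is now present, which keeps the localStorage value in sync with the body class.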

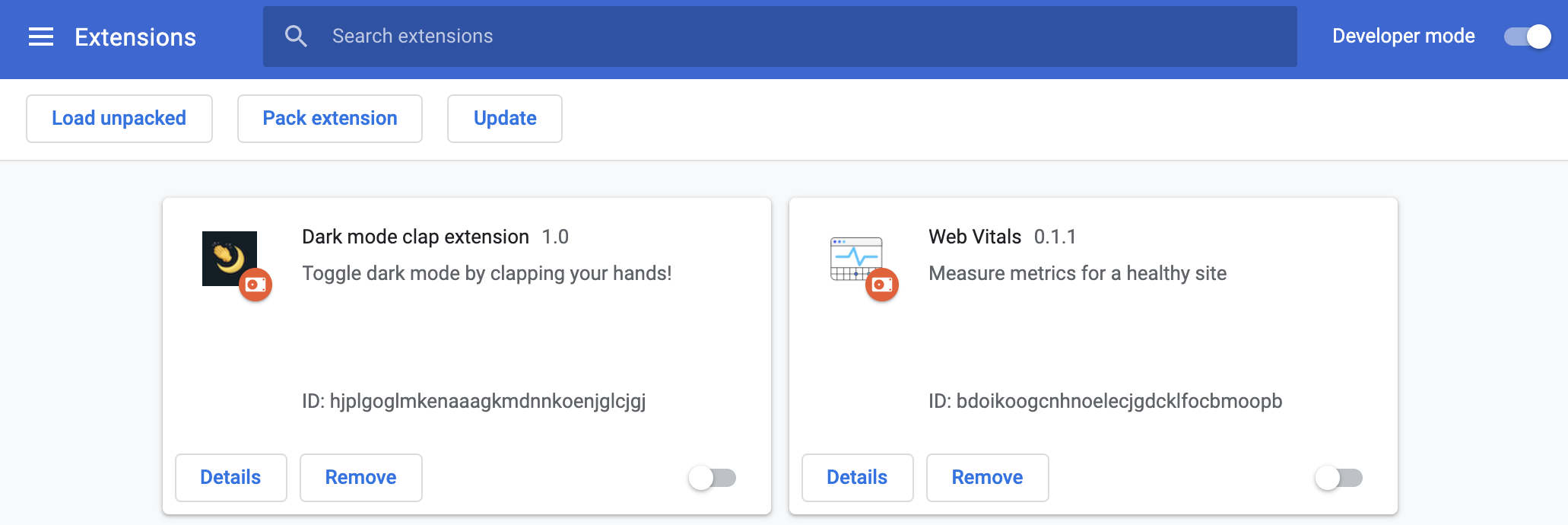

Install the extension

To be able to test that this code works, you have to install the extension in your browser.

(Before doing so, you might have to bundle your extension, depending on what tools you used.)

To install it, the steps to follow are:

- Visit chrome://extensions
- Toggle Developer mode, located at the top right of the page
- Click on Load unpacked and select the folder with your bundled extension

If all goes well, when you visit a page your extension is set to run on, it should ask for microphone permission so it can detect live audio, and then start predicting!

--

That's it! Overall, this project wasn't even really about toggling dark mode, but I've wanted to learn about using TensorFlow.js in a Chrome extension for a while, so this seemed like the perfect opportunity! 😃

If you want to check out the code, here's the repo!

I can now tick that off my never-ending list. ✅